Is AI Reading Your Website Without You Knowing?

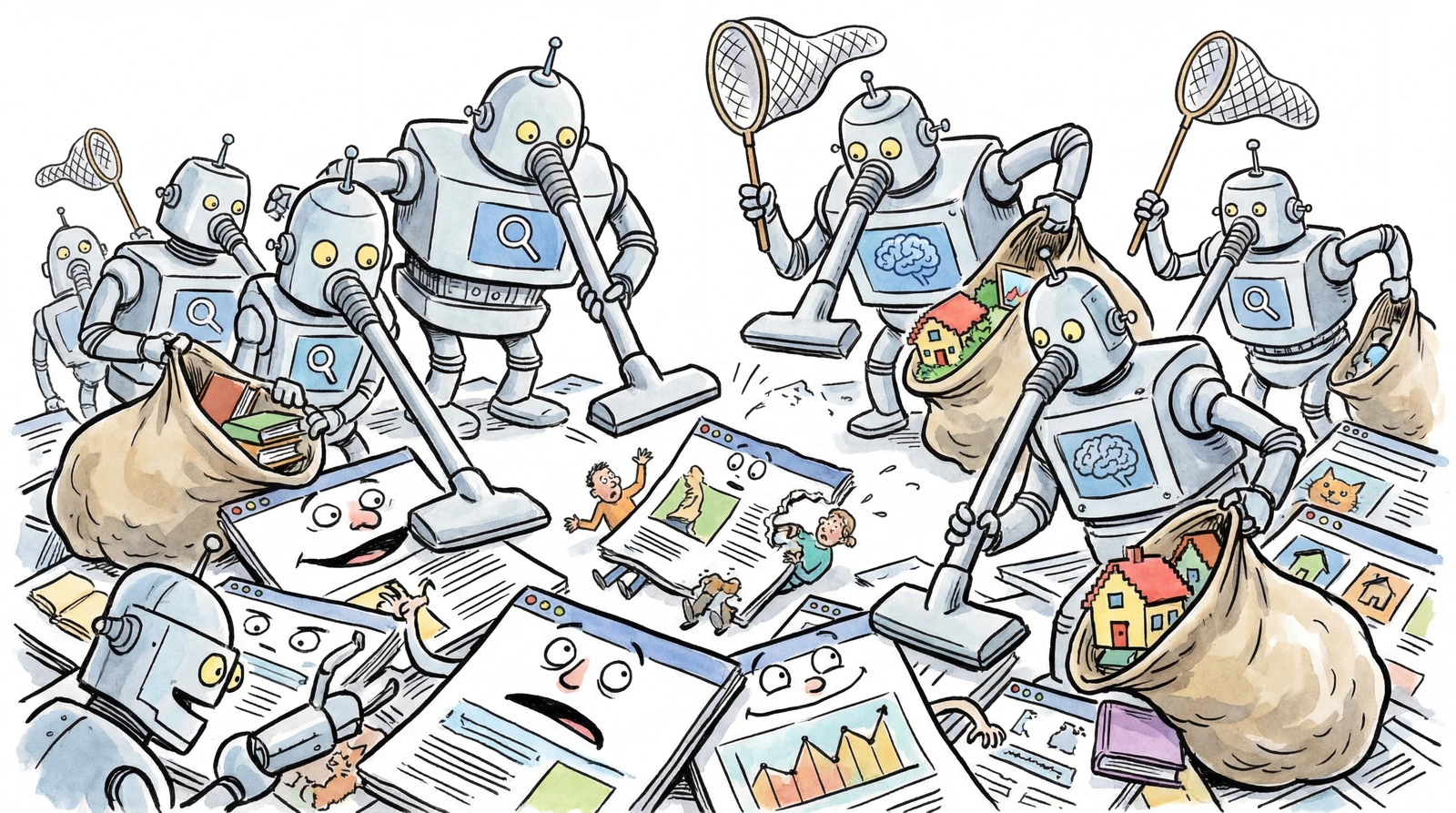

When you search on Google, your website shows up — right? That's because Google sends an automated program (called a bot) to visit your site and read its content ahead of time. This process of bots traveling across the web to collect information is called crawling.

But today, it's not just search engines doing this. AI systems like ChatGPT, Gemini, and Claude are visiting websites too — collecting your content without ever asking permission.

In this guide, we'll walk through what crawling actually is, how to control it with three key tools, and how to flip the script — making AI more likely to cite your content through a strategy called GEO.

Contents

- 1. What Is Web Crawling?

- 1-1. How Search Engine Crawling Works

- 1-2. How AI Bot Crawling Is Different

- 2. Three Tools to Control Crawling

- 2-1. robots.txt: The Entry Rules

- 2-2. ai.txt: Your AI Usage Policy

- 2-3. llms.txt: A Table of Contents for AI

- 3. How the Three Files Compare

- 4. Are These Rules Actually Followed?

- 4-1. Who Follows the Rules — and Who Doesn't?

- 4-2. Where Does Legal Protection Stand?

- 4-3. So What Should You Actually Do?

- 5. Has AI Already Scraped Your Site? 5 Ways to Check

- ① Server Log (Access Log) Analysis

- ② Ask the AI Directly

- ③ Online Crawler Access Checkers

- ④ Search Public Training Datasets

- ⑤ Behavioral Bot Detection

- 6. Practical Checklist

- Step 1: Define Your AI Content Policy

- Step 2: Create and Upload ai.txt

- Step 3: Create and Upload llms.txt

- Step 4: Set Up a Regular Update Process

- 7. GEO (Generative Engine Optimization) Basics

- 7-1. What Is GEO?

- 7-2. SEO vs. GEO: Core Differences

- 7-3. How AI Evaluates “Good Content”

- 7-4. Core GEO Strategies

- Today's Takeaways

1. What Is Web Crawling?

Crawling is the process by which search engines and AI bots automatically visit websites, read the content on each page, and store it.

NOTE

Why does crawling exist in the first place?

Just because you build a website doesn't mean it automatically appears on Google. Think of it like a librarian searching for new books to add to the catalog.

- There are billions of web pages out there, but search engines have no way of knowing they exist on their own.

- So search engines send out automated programs — called crawlers or bots — to visit websites and read their content.

- That content gets saved in an index, like a library's card catalog.

- When someone searches, the most relevant pages from that index are surfaced.

In short: A page that hasn't been crawled = a book with no catalog entry = a page no one can find.

1-1. How Search Engine Crawling Works

- Discovery: Search engine bots (like Googlebot) follow links to find new pages

- Crawling: They read the page's HTML, text, images, and other content

- Indexing: That content is analyzed and stored in a searchable format

- Ranking: When someone searches, relevant pages are ranked and displayed

1-2. How AI Bot Crawling Is Different

Generative AI systems like ChatGPT, Gemini, and Perplexity use web content in a fundamentally different way than traditional search engines.

| Category | Search Engines | Generative AI |
|---|---|---|
| Purpose | Index pages → serve a list of links | Learn from / summarize content → generate direct answers |
| User behavior | Click a result → visit the site | Get the answer from AI → may never visit the site |
| Bot types | Googlebot, Bingbot, etc. | GPTBot, ClaudeBot, Google-Extended, etc. |
| Traffic trend | Steady | Growing rapidly year over year |

NOTE

KNOW — Why does this matter?

Website owners now need to answer a new question: “Should AI be allowed to use my content for training?”

The EU has already started legally protecting the right of copyright holders to opt out — to explicitly say “don't use my content for AI training.” That trend is spreading globally.

2. Three Tools to Control Crawling

2-1. robots.txt: The Entry Rules

- Location: https://yourdomain.com/robots.txt

- Target: Search engines, general crawlers, some AI bots

- Role: Defines who is allowed in and how far they can go

- Standard: One of the oldest web standards; widely adopted

Basic syntax:

User-agent: * # Applies to all crawlers

Allow: /blog/ # Allow crawling of /blog/

Disallow: /admin/ # Block crawling of /admin/

User-agent: GPTBot # Specific rules for OpenAI's bot

Disallow: / # Block GPTBot entirely

Sitemap: https://example.com/sitemap.xml

Key points:

- You can set rules per bot (GPTBot, ClaudeBot, Google-Extended, etc.)

- Disallow: / → blocks that bot from crawling your entire site

- Allow: / → grants full access

- This is the most foundational tool for crawl control
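To see how a rule-following crawler might read the sample rules above, here is a minimal sketch using Python's standard-library urllib.robotparser. The domain example.com and the helper name is_allowed are placeholders for illustration. Python's parser applies the first matching rule, while some crawlers prefer the longest match, so treat the result as an approximation of real bot behavior.

```python
from urllib.robotparser import RobotFileParser

# Sample rules from this section (example.com is a placeholder domain).
ROBOTS_TXT = """\
User-agent: *
Allow: /blog/
Disallow: /admin/

User-agent: GPTBot
Disallow: /

Sitemap: https://example.com/sitemap.xml
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Check a few (bot, URL) pairs against the sample rules.
for agent, url in [
    ("Googlebot", "https://example.com/blog/post-1"),
    ("Googlebot", "https://example.com/admin/"),
    ("GPTBot", "https://example.com/blog/post-1"),
]:
    print(agent, url, is_allowed(ROBOTS_TXT, agent, url))
```

Keep each User-agent line directly above its Allow/Disallow lines. Some parsers, including this one, treat a blank line as the end of a rule group.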

2-2. ai.txt: Your AI Usage Policy

- Location: https://yourdomain.com/ai.txt

- Target: AI crawlers and AI agents

- Role: Explains what AI systems are, and aren't, allowed to do with your content

- Standard: Still in the proposal/experimental stage; not yet an official standard

What ai.txt lets you control:

- Permitted vs. restricted content

  - Public blog, help docs → allow AI summarization, search, Q&A

  - Paid courses, members-only content → block from AI training or summarization

- Preferred data paths

  - Use Sitemap, Data-Format, and API-Search to guide AI toward the most efficient reading paths

- Policy statement

  - Human-readable AI usage principles (for legal and brand alignment)

Example:

# ai.txt for example.com

# Last-Updated: 2026-03-26

User-Agent: *

Allow: /blog/

Allow: /docs/

Allow: /help/

Disallow: /members/

Disallow: /billing/

Sitemap: https://example.com/sitemap.xml

# Policy:

# - Public blog, docs, and help center pages MAY be used for

# indexing, search, summarization, and Q&A answering.

# - Member-only pages and user data MUST NOT be used for

# model training or AI-generated answers.

Sites where ai.txt matters most:

- Sites with premium or professional content (courses, reports, etc.)

- Community platforms where users generate most of the content

- High legal/compliance risk areas (finance, healthcare, education, public sector)

2-3. llms.txt: A Table of Contents for AI

- Location: https://yourdomain.com/llms.txt

- Target: LLMs (like ChatGPT) when generating answers

- Role: A structured index that tells AI which documents to read first when answering questions about your site

- Format: Markdown

- Standard: Proposed by data scientist Jeremy Howard at llmstxt.org; unofficial but spreading fast

Structure of llms.txt:

- An H1 title (#) with your site or service name

- A blockquote (>) with a 2–3 line summary

- A regular paragraph with a service description

- Sections (##) with links to your key documents

Example:

# KnowAI

> KnowAI is an AI education and consulting platform.

> This file helps LLMs find the most important resources

> and answer questions accurately.

Last-Updated: 2026-03-26

## Docs

- [Getting Started](https://knowai.com/docs/start): Overview and how to begin

- [Features](https://knowai.com/docs/features): Core feature descriptions

- [FAQ](https://knowai.com/docs/faq): Frequently asked questions

## Blog

- [All Articles](https://knowai.com/blog): AI tips and trends

## Policies

- [Terms of Service](https://knowai.com/terms)

- [Privacy Policy](https://knowai.com/privacy)

llms.txt checklist:

- Includes an H1 title

- Includes a blockquote summary

- Key document links are organized in list format

- All URLs are live (no 404s)
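The structural items on this checklist can be checked automatically. The sketch below is one possible approach, not an official validator: check_llms_txt and its report keys are hypothetical names. It assumes links are written as Markdown list items in the form shown in the example above.

```python
import re

# Matches list items such as "- [Title](https://example.com/page): description".
LINK_RE = re.compile(r"^- \[([^\]]+)\]\((https?://[^)\s]+)\)")


def check_llms_txt(text: str) -> dict:
    """Check the structural checklist items and collect the linked URLs."""
    lines = [line.strip() for line in text.splitlines() if line.strip()]
    urls = [m.group(2) for line in lines if (m := LINK_RE.match(line))]
    return {
        "has_h1": any(line.startswith("# ") for line in lines),
        "has_blockquote": any(line.startswith(">") for line in lines),
        "has_link_list": bool(urls),
        "urls": urls,
    }


SAMPLE = """\
# KnowAI

> KnowAI is an AI education and consulting platform.

## Docs

- [Getting Started](https://knowai.com/docs/start): Overview and how to begin
- [FAQ](https://knowai.com/docs/faq): Frequently asked questions
"""

report = check_llms_txt(SAMPLE)
print(report)
```

The last checklist item (no 404s) needs network access, so it is left out here. One option is to request each entry in report["urls"] and inspect the HTTP status.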

3. How the Three Files Compare

| Category | robots.txt | ai.txt | llms.txt |
|---|---|---|---|
| Analogy | 🚧 Entry rules | 📋 Usage policy | 📖 AI site guide |
| Primary audience | Search engine bots, some AI bots | AI crawlers only | LLMs (during answer generation) |
| Purpose | Allow or block bot access | Define what AI can/can't do with your content | Provide a prioritized reading list for AI |
| Format | Text, rule-based | Text, rules + metadata | Markdown |
| Standardization | ✅ Established web standard | ⚠️ Experimental | ⚠️ Unofficial (gaining traction fast) |

4. Are These Rules Actually Followed?

All three of these files rely on voluntary compliance. They're not technical barriers — they're more like posting a sign that says “these are our house rules.”

CAUTION

In 2025, a U.S. court (Ziff Davis v. OpenAI) had this to say about robots.txt:

“A robots.txt file is like a ‘No Trespassing’ sign posted on your lawn. It's not a lock on the door — it's a request that visitors choose to respect.”

In other words, if an AI bot ignores that request, current U.S. law makes it difficult to treat that as hacking or a DMCA violation.

4-1. Who Follows the Rules — and Who Doesn't?

| Category | Generally Compliant | Known Violators |
|---|---|---|
| Key players | Bots from major companies, such as Googlebot, GPTBot (OpenAI), and ClaudeBot (Anthropic), generally respect robots.txt | Some AI startups and smaller crawlers have been reported scraping without permission, ignoring robots.txt entirely |
| The catch | Official bots are identifiable by name | Some crawlers disguise their identity, making them very hard to block |

- NPR (2024) reported that “AI crawlers are running rampant, ignoring the basic rules of the internet”

- Cloudflare launched a one-click “AI Scrapers and Crawlers” block toggle in July 2024, letting site owners technically block bots that ignore robots.txt

4-2. Where Does Legal Protection Stand?

| Region | Current Status |
|---|---|
| 🇪🇺 EU | DSM Directive Article 4: If a copyright holder explicitly opts out via robots.txt, it carries legal weight. Under the EU AI Act (passed 2024), GPAI providers must honor machine-readable opt-outs starting August 2025 |
| 🇺🇸 US | No unified federal law. Courts have declined to recognize robots.txt as a technical protection measure, but copyright and contract violation cases are moving forward (NYT v. OpenAI, Reddit v. Anthropic, etc.) |
| 🇰🇷 South Korea | AI Basic Act (effective Jan 2026) introduces high-risk AI regulation and explainability requirements. No explicit rules on crawling yet, but copyright law may apply |
| 🇯🇵 Japan | Copyright Act Article 30-4 broadly permits data use for AI training, except where it “unreasonably harms the interests of the copyright holder” |

4-3. So What Should You Actually Do?

NOTE

Real protection = layering your defenses

- robots.txt + ai.txt + llms.txt: Your minimum on-the-record statement (valuable as evidence in disputes)

- Add AI crawling restrictions to your Terms of Service: Creates a contract violation claim

- WAF (Web Application Firewall) rules: Use Cloudflare and similar tools to technically block AI bots

- Log monitoring: Track and record which bots are accessing your site

- Licensing agreements: Negotiate content use deals with major AI companies (see: Reddit–Google)

robots.txt alone isn't enough. But there's a meaningful legal difference between “doing nothing” and “making your position clearly known.” Especially in the EU, that distinction already carries legal weight.

5. Has AI Already Scraped Your Site? 5 Ways to Check

Setting a robots.txt rule is about preventing future crawling. But what about crawling that's already happened? Here are practical methods anyone — even a solo creator or small team — can use.

① Server Log (Access Log) Analysis

- Difficulty: ⭐⭐ (requires basic server knowledge)

- Cost: Free

- The most reliable method. Your web server (Apache, Nginx, etc.) keeps access logs that record which bots visited, when, and which pages they hit.

User-Agents to look for:

| Bot | Company | Purpose |
|---|---|---|
| GPTBot | OpenAI | Training data collection |
| ChatGPT-User | OpenAI | Real-time browsing |
| ClaudeBot | Anthropic | Training data collection |
| Google-Extended | Google | Gemini training (a robots.txt control token; Google's crawlers do not send it as a User-Agent, so it will not show up in logs) |
| PerplexityBot | Perplexity | AI search & answer generation |
| CCBot | Common Crawl | Open dataset (used in many AI models) |
| Bytespider | ByteDance | Training data collection |

Terminal commands to check:

# Find AI bots in Nginx logs

grep -iE "GPTBot|ClaudeBot|Google-Extended|PerplexityBot|CCBot|Bytespider|ChatGPT-User" /var/log/nginx/access.log

# Count how many times each AI bot visited

grep -ioE "GPTBot|ClaudeBot|Google-Extended|PerplexityBot|CCBot|Bytespider" /var/log/nginx/access.log | sort | uniq -c | sort -rn
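If you would rather script the same count, the sketch below does roughly what the second command does, in Python. The function name count_ai_bots and the sample log lines are invented for illustration, and the User-Agent strings are simplified. Matching is a plain case-insensitive substring search on each line, so a spoofed User-Agent will be counted as if it were genuine.

```python
import re
from collections import Counter

# Bot names to look for. Google-Extended is omitted on purpose: it is a
# robots.txt token, not a User-Agent string that crawlers send with requests.
AI_BOTS = ["GPTBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot", "CCBot", "Bytespider"]
BOT_RE = re.compile("|".join(re.escape(bot) for bot in AI_BOTS), re.IGNORECASE)
CANONICAL = {bot.lower(): bot for bot in AI_BOTS}


def count_ai_bots(log_lines) -> Counter:
    """Count log lines per AI bot, merging upper/lower-case spellings."""
    counts = Counter()
    for line in log_lines:
        match = BOT_RE.search(line)
        if match:
            counts[CANONICAL[match.group(0).lower()]] += 1
    return counts


# Invented sample lines in the common "combined" access-log layout.
SAMPLE_LOG = [
    '203.0.113.5 - - [26/Mar/2026:10:00:00 +0000] "GET /blog/ HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.5 - - [26/Mar/2026:10:00:02 +0000] "GET /docs/ HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; gptbot/1.0)"',
    '198.51.100.7 - - [26/Mar/2026:10:01:00 +0000] "GET / HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '192.0.2.44 - - [26/Mar/2026:10:02:00 +0000] "GET / HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

counts = count_ai_bots(SAMPLE_LOG)
print(counts.most_common())
```

To run it on a real log, pass an open file object instead of the sample list, since the function accepts any iterable of lines.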

NOTE

If you're on a hosted platform (Vercel, Netlify, etc.), you can filter User-Agents in your dashboard's Analytics or Functions logs. If you're using Cloudflare, the free AI Audit feature lets you visualize AI bot traffic at a glance.

② Ask the AI Directly

- Difficulty: ⭐ (anyone can do this)

- Cost: Free

- Ask ChatGPT, Claude, Gemini, or Perplexity specific questions about your site. It's a quick indirect check to see whether the AI already “knows” your content.

How to test:

- Ask: “Do you know anything about knowai.com?”

- Ask about a unique phrase that only exists on your site

- Ask it to summarize one of your blog posts

WARNING

NO — Just because an AI “knows” your content doesn't mean it directly crawled your site. It may have learned from a public dataset like Common Crawl. Likewise, “I don't know your site” is no guarantee your content wasn't used — it may have been trained on it without surfacing it in that particular response.

③ Online Crawler Access Checkers

- Difficulty: ⭐ (anyone can do this)

- Cost: Free

- If you don't have server log access, third-party tools can check your robots.txt configuration and whether AI bots can currently access your site.

Recommended tools:

- MRS Digital AI Crawler Access Checker: Enter your domain and instantly see whether GPTBot, ClaudeBot, PerplexityBot, etc. are blocked

- Cloudflare AI Audit (free plan included): If you're on Cloudflare, see which AI bots visited and whether they followed your robots.txt

- Cloudflare “AI Scrapers and Crawlers” one-click block: Available even on free plans; one toggle blocks all AI bots

④ Search Public Training Datasets

- Difficulty: ⭐⭐ (requires some research skills)

- Cost: Free

- Many AI models are trained on Common Crawl, a massive public web archive. If your site is in that archive, it's very likely been used in AI training.

NOTE

What is Common Crawl?

A U.S.-based 501(c)(3) nonprofit founded in 2007 by Gil Elbaz, with fewer than 10 employees. Every month, its crawler (CCBot) collects billions of publicly accessible web pages and makes the archive freely available on Amazon S3. The mission: democratize web data as a public good.

OpenAI (GPT), Google (Gemini), Meta (LLaMA), Anthropic (Claude) — virtually every major AI company has used this data for LLM training. In 2025, however, The Atlantic reported that Common Crawl had been collecting paywalled articles and effectively giving AI companies a backdoor into premium content — sparking significant controversy.

How to check:

- Common Crawl Index (index.commoncrawl.org): Search your domain to see which pages were collected and when

- Have I Been Trained? (haveibeentrained.com): Check whether your images appear in AI training datasets like LAION; especially useful for visual creators
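The Common Crawl lookup can also be scripted. The sketch below assumes the index server's CDX-style query interface, where each crawl has its own index endpoint and results come back as one JSON object per line. The crawl ID CC-MAIN-2024-33 is only an example, and build_cc_query and fetch_captures are hypothetical helper names. The list of available crawl IDs is published at index.commoncrawl.org/collinfo.json.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen


def build_cc_query(domain: str, crawl_id: str) -> str:
    """Build an index query URL covering every captured page under domain."""
    params = urlencode({"url": f"{domain}/*", "output": "json"})
    return f"https://index.commoncrawl.org/{crawl_id}-index?{params}"


def fetch_captures(domain: str, crawl_id: str) -> list[dict]:
    """Fetch capture records for domain from one crawl. Needs network access."""
    with urlopen(build_cc_query(domain, crawl_id), timeout=30) as resp:
        body = resp.read().decode("utf-8")
    # The response is newline-delimited JSON, one record per captured URL.
    return [json.loads(line) for line in body.splitlines() if line.strip()]


# Building the URL works offline; the crawl ID here is just an example.
print(build_cc_query("example.com", "CC-MAIN-2024-33"))
```

Calling fetch_captures("yourdomain.com", crawl_id) should return records that include fields such as the captured URL and a timestamp. A crawl with no captures for the domain is expected to produce an HTTP error instead of an empty list, so wrap the call in error handling when looping over several crawls.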

⑤ Behavioral Bot Detection

- Difficulty: ⭐⭐⭐ (requires development knowledge)

- Cost: Free to paid

- To catch bots disguising their User-Agent, you need to go beyond log analysis and look at behavioral patterns.

Detection signals:

- Abnormal request patterns: Dozens or hundreds of pages requested sequentially in a short window

- No mouse or scroll activity: Simulates a browser but has no user interactions

- No JavaScript execution: Fetches raw HTML via simple HTTP requests only

- Repeated full-site scans from the same IP

Tools:

- Cloudflare Bot Management: ML-based detection that catches disguised bots too

- Server logs + a simple script: A basic Python or Bash script aggregating request frequency, pages per IP, and User-Agent patterns can catch the obvious offenders
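A "simple script" of this kind might look like the sketch below, which covers only the first detection signal: many requests from one IP in a short window. The threshold of 30 requests per 60 seconds, the function names, and the sample log lines are all arbitrary assumptions to tune for your own traffic. It expects the common "combined" access-log layout and ignores the User-Agent entirely, which is the point when bots disguise themselves.

```python
import re
from collections import defaultdict
from datetime import datetime, timedelta

# Captures client IP, timestamp, and request path from a combined-format line.
LOG_RE = re.compile(r'^(\S+) \S+ \S+ \[([^\]]+)\] "\S+ (\S+)')


def flag_bursty_ips(log_lines, max_requests=30, window=timedelta(seconds=60)):
    """Return {ip: peak_count} for IPs exceeding max_requests within any window."""
    hits = defaultdict(list)
    for line in log_lines:
        match = LOG_RE.match(line)
        if match:
            ip, stamp, _path = match.groups()
            hits[ip].append(datetime.strptime(stamp, "%d/%b/%Y:%H:%M:%S %z"))

    flagged = {}
    for ip, times in hits.items():
        times.sort()
        start = 0
        peak = 0
        # Sliding window: advance `start` until the span fits inside `window`.
        for end, current in enumerate(times):
            while current - times[start] > window:
                start += 1
            peak = max(peak, end - start + 1)
        if peak > max_requests:
            flagged[ip] = peak
    return flagged


def make_line(ip, second, path):
    """Build one invented log line (illustration only)."""
    return (f'{ip} - - [26/Mar/2026:10:00:{second:02d} +0000] '
            f'"GET {path} HTTP/1.1" 200 512 "-" "Mozilla/5.0"')


# One IP requests 40 different pages in 40 seconds; another makes 3 requests.
sample = [make_line("203.0.113.9", s, f"/page/{s}") for s in range(40)]
sample += [make_line("198.51.100.7", s * 20, "/blog/") for s in range(3)]

flagged = flag_bursty_ips(sample)
print(flagged)
```

Request rate alone will also flag legitimate crawlers and busy shared IPs, so treat the output as a list of candidates to review, not a block list.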

NOTE

For solo creators and small teams, combining ①–④ is the most realistic approach.

- Cloudflare's free plan gives you AI bot traffic visibility + one-click blocking

- Server logs (checked periodically) show you which bots are showing up

- Asking AI directly is the fastest way to gauge your content's exposure

- Common Crawl Index tells you if you're already in a major public training dataset

That said: there is no way to definitively prove whether AI has trained on your data. AI companies don't publish training data lists, and the technology to trace a specific dataset's contribution to a model's outputs is still in research.

6. Practical Checklist

Step 1: Define Your AI Content Policy

- Define what content AI may use (e.g., public blog, help docs, open documentation)

- Define what content AI may not use (e.g., paid courses, member-only content, personal data)

Step 2: Create and Upload ai.txt

- Draft your ai.txt using a template

- Save in UTF-8 encoding

- Upload to your site root → verify at https://yourdomain.com/ai.txt

- Cross-check for conflicts with robots.txt
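The cross-check in the last item can be partly automated. The sketch below assumes your ai.txt uses the same User-agent / Allow / Disallow directives as the example earlier in this guide. ai.txt has no official syntax, so that is an assumption, not a rule. The function names are hypothetical, and the comparison is deliberately simple: one User-agent line per group and exact path matches only.

```python
def parse_rules(text: str, agent: str = "*") -> dict:
    """Collect {path: "allow" | "disallow"} for one user-agent group."""
    rules = {}
    active = False
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments
        if ":" not in line:
            continue
        key, value = (part.strip() for part in line.split(":", 1))
        key = key.lower()
        if key == "user-agent":
            active = value.lower() == agent.lower()
        elif active and key in ("allow", "disallow") and value:
            rules[value] = key
    return rules


def find_conflicts(robots_txt: str, ai_txt: str, agent: str = "*") -> list:
    """List paths that one file allows and the other disallows."""
    robots = parse_rules(robots_txt, agent)
    ai = parse_rules(ai_txt, agent)
    return sorted(path for path in robots.keys() & ai.keys() if robots[path] != ai[path])


# Invented sample files: /docs/ is blocked in robots.txt but allowed in ai.txt.
ROBOTS = """\
User-agent: *
Allow: /blog/
Disallow: /admin/
Disallow: /docs/
"""

AI = """\
# ai.txt for example.com
User-Agent: *
Allow: /blog/
Allow: /docs/
Disallow: /members/
Sitemap: https://example.com/sitemap.xml
"""

conflicts = find_conflicts(ROBOTS, AI)
print(conflicts)
```

A path that appears in only one file is not reported. It is still worth reviewing by hand whether a parent rule in the other file already covers it.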

Step 3: Create and Upload llms.txt

- Write H1 title + blockquote summary + section-by-section link list

- Verify all links are live (no 404s)

- Confirm it's accessible at https://yourdomain.com/llms.txt

Step 4: Set Up a Regular Update Process

- Update ai.txt / llms.txt whenever your Terms of Service or Privacy Policy changes

- Always update the Last-Updated date

7. GEO (Generative Engine Optimization) Basics

7-1. What Is GEO?

GEO (Generative Engine Optimization) is the practice of optimizing your content so it gets discovered and cited more often by AI-powered search engines like ChatGPT, Claude, Gemini, and Perplexity.

- Traditional SEO = optimizing for Google/Bing rankings (keyword- and backlink-focused)

- GEO = getting AI to choose your content as a source when generating answers (context- and credibility-focused)

- Related terms: AEO (Answer Engine Optimization), AI SEO

7-2. SEO vs. GEO: Core Differences

| Category | SEO | GEO |
|---|---|---|
| Optimizes for | Google, Bing, etc. | ChatGPT, Perplexity, etc. |
| Output format | A list of links (the classic “blue links”) | A synthesized AI-generated answer (+ source citations) |
| Key factors | Keywords, backlinks, domain authority | Context understanding, information credibility, structured data |
| Content style | Keyword-focused | Quantitative, specific, context-rich |
| User behavior | Search → click → visit site | Ask → get AI answer → site visit optional |

7-3. How AI Evaluates “Good Content”

- Semantic understanding: Grasps context and intent, not just keywords

- Information synthesis: Combines multiple sources into a comprehensive answer

- Credibility assessment: Evaluates accuracy and source trustworthiness

- Recency weighting: More recent content gets higher weight

7-4. Core GEO Strategies

- Write structured content

  - Use a clear heading hierarchy (H1–H3)

  - Lean into Q&A format

  - Lead with the most important information (inverted pyramid)

- Be specific and quantitative

  - ❌ “We have exceptional technical capabilities.”

  - ✅ “We reduced monthly energy consumption by 18% as of 2024.”

- Build E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness)

  - Clearly identify authors and their credentials

  - Cite data, statistics, and research

  - Always link to your sources

- Build the technical foundation

  - robots.txt: Allow AI bot crawling

  - ai.txt: State your AI usage policy

  - llms.txt: Provide a prioritized document list

  - Use structured data (Schema.org) markup

- Build presence across platforms

  - Get your brand mentioned on Wikipedia, LinkedIn, and industry forums

  - Earn citations from a variety of trusted sources
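For the structured-data item in the list above, one common form is a JSON-LD block using the Schema.org Article type. The sketch below generates such a block in Python. The function name article_jsonld and all the values passed to it (headline, author, date, URL) are placeholders, and which properties matter to any given AI system is not something this guide can confirm.

```python
import json


def article_jsonld(headline: str, author: str, date_published: str, url: str) -> str:
    """Return a <script> tag holding Schema.org Article metadata as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }
    body = json.dumps(data, indent=2, ensure_ascii=False)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Placeholder values for illustration only.
snippet = article_jsonld(
    headline="Is AI Reading Your Website Without You Knowing?",
    author="Jane Doe",
    date_published="2026-03-26",
    url="https://example.com/blog/ai-crawling",
)
print(snippet)
```

The resulting tag is normally placed in the page's HTML head. Naming the author and publication date here supports the E-E-A-T and recency points above.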

Today's Takeaways

- Crawling is how search engines and AI read the web. In the AI era, you need to think about both protection and opportunity at the same time

- robots.txt (entry rules) + ai.txt (usage policy) + llms.txt (AI site guide) give you layered protection

- Legal enforcement is still limited, but making your position clear on record can be decisive in a dispute

- GEO is the emerging strategy for getting your content cited in AI-generated answers

- Perfect protection isn't possible, but doing nothing and being prepared are two very different places to be

NOTE

NOW — Start right now

- Open your site's robots.txt and check whether you have any rules for AI bots

- Search your domain on Common Crawl Index

- Ask ChatGPT or Claude: “Do you know anything about [your site]?”

Just doing these three things will give you a clear picture of how AI currently sees your site.